Finalist for the Best Robotic Vision Paper at the 2017 IEEE/RAS International Conference on Robotics and Automation

- 01 June 2017

- Singapore

- Autonomous Motion

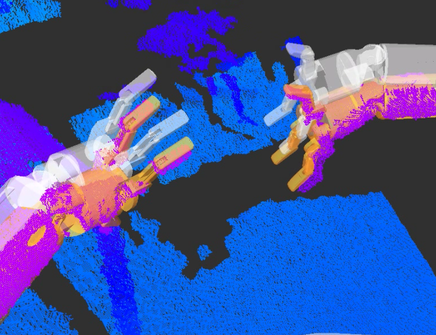

The paper "Probabilistic Articulated Real-Time Tracking for Robot Manipulation" by Cristina Garcia Cifuentes, Jan Issac, Manuel Wüthrich, Stefan Schaal and Jeannette Bohg was a finalist for the Best Robotic Vision Paper award at the 2017 IEEE/RAS International Conference on Robotics and Automation.

The paper proposes a probabilistic filtering method that fuses joint measurements with depth images to yield a precise, real-time estimate of the end-effector pose in the camera frame. Combined with our previous work on object tracking, this method endows a robot with robust hand-eye coordination.

Along with the paper, we released open-source code for Bayesian Object Tracking, together with datasets for rigid and articulated object tracking annotated with ground truth.