Departments

Our goal is to understand the principles of Perception, Action and Learning in autonomous systems that successfully interact with complex environments and to use this understanding to design future artificially intelligent systems. The Institute studies these principles in biological, computational, hybrid, and material systems ranging from nano to macro scales. We take a highly interdisciplinary approach that combines mathematics, computation, materials science, and biology.

The Max Planck Institute for Intelligent Systems has campuses in Stuttgart and Tübingen. The institute combines – within one center – theory, software, and hardware expertise in the research field of intelligent systems. The Tübingen campus focuses on how intelligent systems process information to perceive, act, and learn through research in the areas of machine learning, computer vision, and human-scale robotics. Our Stuttgart campus has world-leading expertise in micro- and nano-robotic systems, haptic perception, human-robot interaction, robotic materials, bio-hybrid systems, and medical robotics.

Empirical Inference

Bernhard Schölkopf

The members of the Empirical Inference department are dedicated to machine learning and causal inference. They develop algorithms that independently recognize regularities in data and draw conclusions from them. The researchers’ primary goal is to understand how living beings and artificial systems recognize structures in order to act in the world. They aim to contribute to the application of theoretical methods of machine learning, for instance in medicine or astronomy.

Haptic Intelligence

Katherine J. Kuchenbecker

The Haptic Intelligence department aims to advance the scientific understanding of haptic interaction while simultaneously inventing human-computer and human-robot systems that take advantage of the unique capabilities of the sense of touch. The department pursues this goal by undertaking research in four main fields:

- understanding tactile contact during physical interactions by both humans and robots,

- creating and characterizing haptic interface technology,

- advancing and evaluating teleoperation interfaces, and

- designing contact-based human-robot interaction systems.

Modern Magnetic Systems

Gisela Schütz

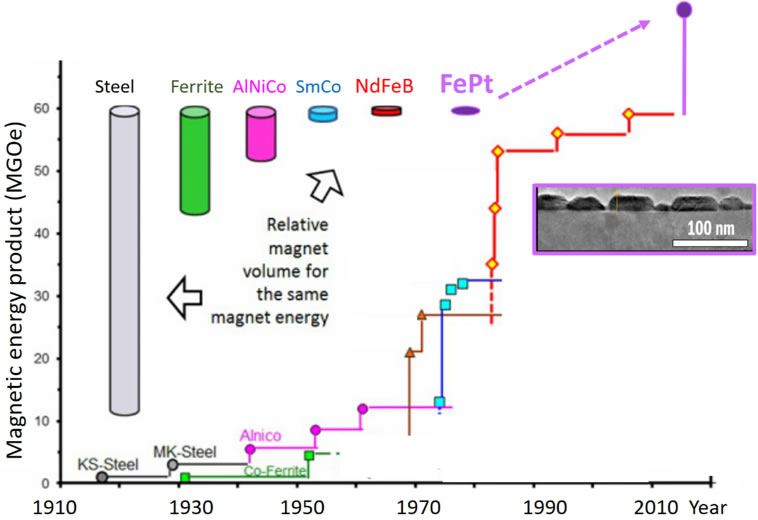

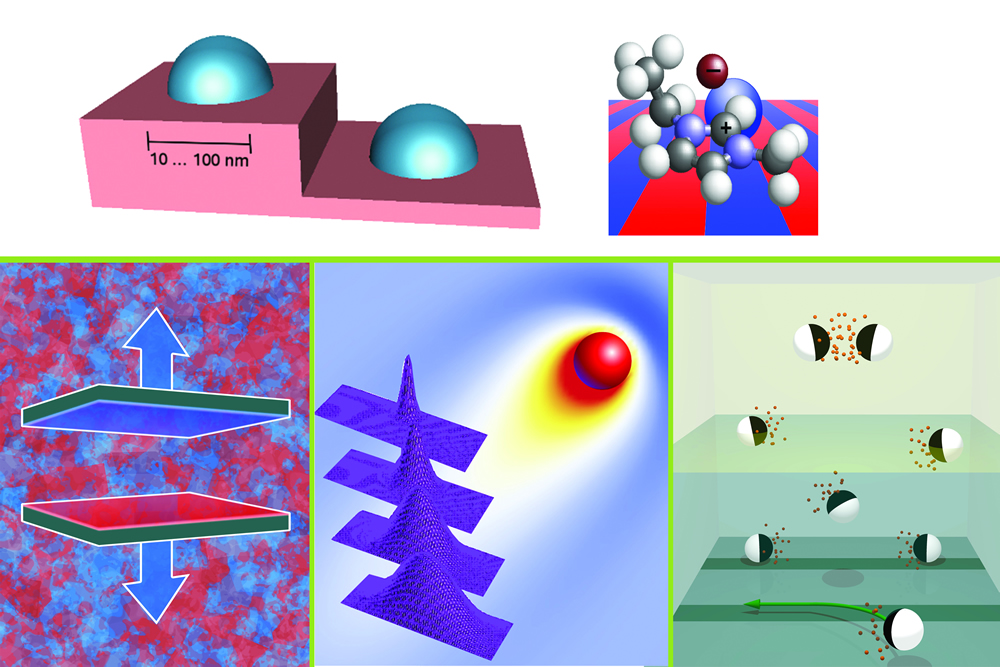

The research of the Modern Magnetic Systems department is dedicated to exploring nanomagnetic structures, developing novel nano- and micro-sized devices, and understanding their spin dynamics. The application and steady improvement of X-ray-based imaging techniques play an essential role in this work. Using advanced nanoprinting techniques, the department’s researchers developed novel plastic X-ray lenses with nanometer-sized features and excellent focusing capabilities. For this and other projects, the team uses the X-ray microscope MAXYMUS, located at BESSY II, an 80-meter-wide synchrotron radiation source at the Helmholtz Center Berlin, with which even the smallest structures can be made more visible. Another research focus is the development of novel supermagnets at the nano- to micrometer scale, which far exceed the performance of conventional magnets.

Physical Intelligence

Christoph Keplinger (Acting Director)

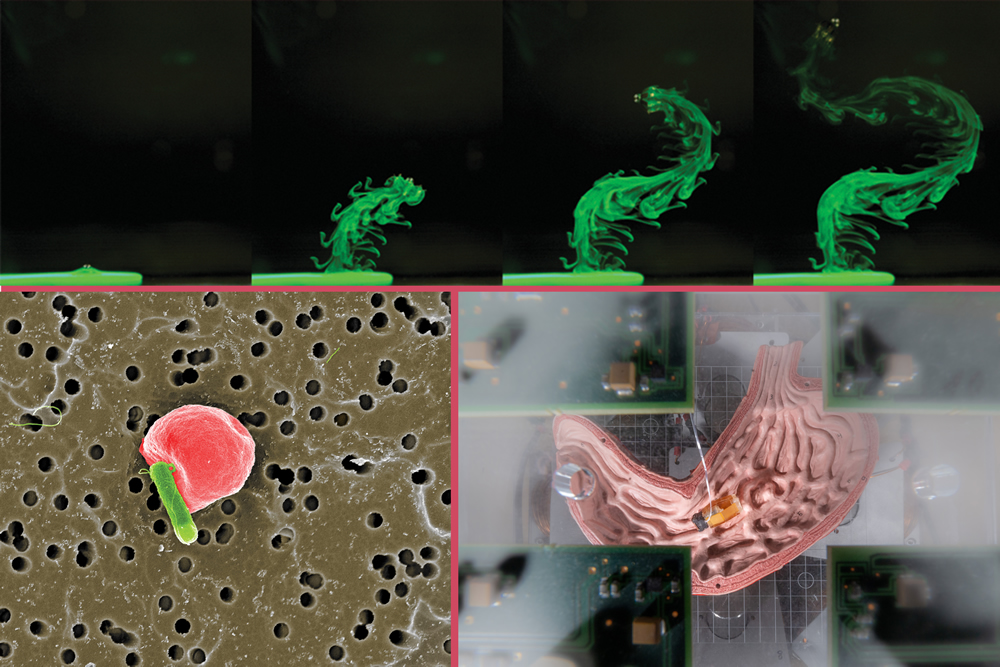

When developing small-scale mobile robots made of smart and soft materials, the Physical Intelligence team looks to nature for inspiration. The built-in physical intelligence of biological systems serves as a model for micro- and milli-machines. Due to their small size, the intelligence of such robots is mainly rooted in their physical design, the material used, and their ability to adapt and self-organize – rather than in their inherently limited computation, actuation, powering, perception, and control capabilities. The team focuses on medical applications of these novel small-scale robotic systems to revolutionize the healthcare technologies of the future by enabling unprecedented minimally invasive medical interventions inside the human body.

Robotic Materials

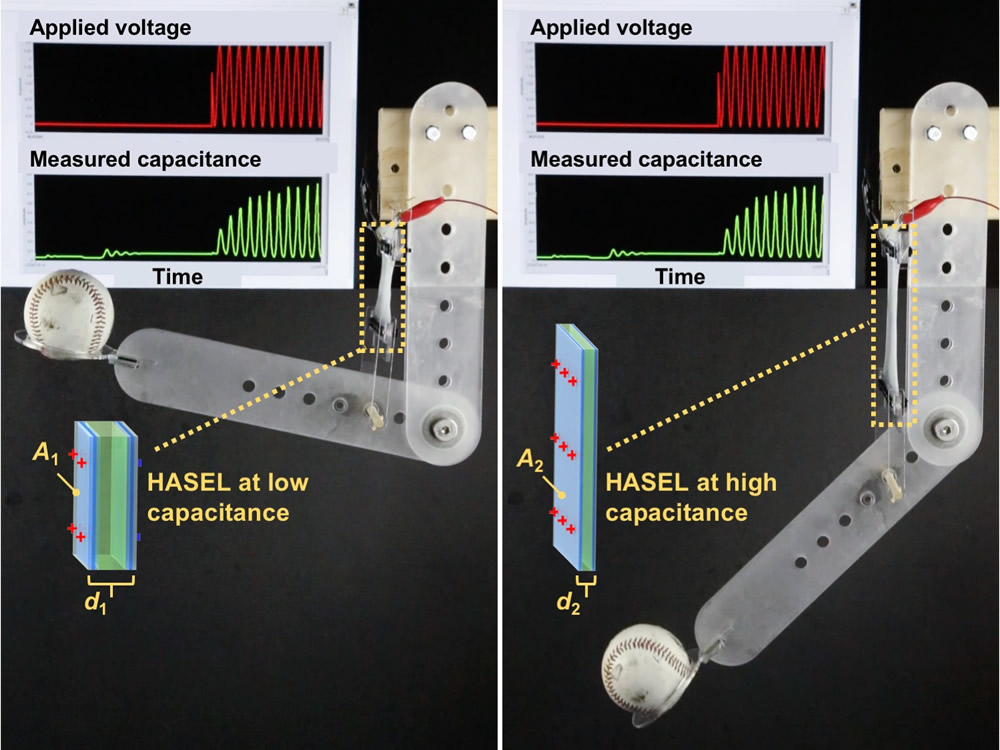

Christoph Keplinger

The “Robotic Materials” department pursues research on soft robotics, functional polymers and energy capture – three interrelated areas that are critical to robotic materials, a new class of material systems that tightly integrate actuation, sensing and even computation to provide physical building blocks for the intelligent systems of the future.

Theory of Inhomogeneous Condensed Matter

Siegfried Dietrich

The department Theory of Inhomogeneous Condensed Matter at the Max Planck Institute for Intelligent Systems, together with the Chair for Theoretical Physics held in personal union at the University of Stuttgart, aims to relate the macroscopic properties of condensed matter to the collective behavior of the underlying microscopic degrees of freedom. Based on statistical physics, the research focuses on systems that are inhomogeneous on mesoscopic length scales, encompassing interfaces as well as anisotropy and disorder. These systems exhibit a wealth of phenomena and can generate states of condensed matter which do not form in bulk materials, offering perspectives for useful applications.

Autonomous Motion

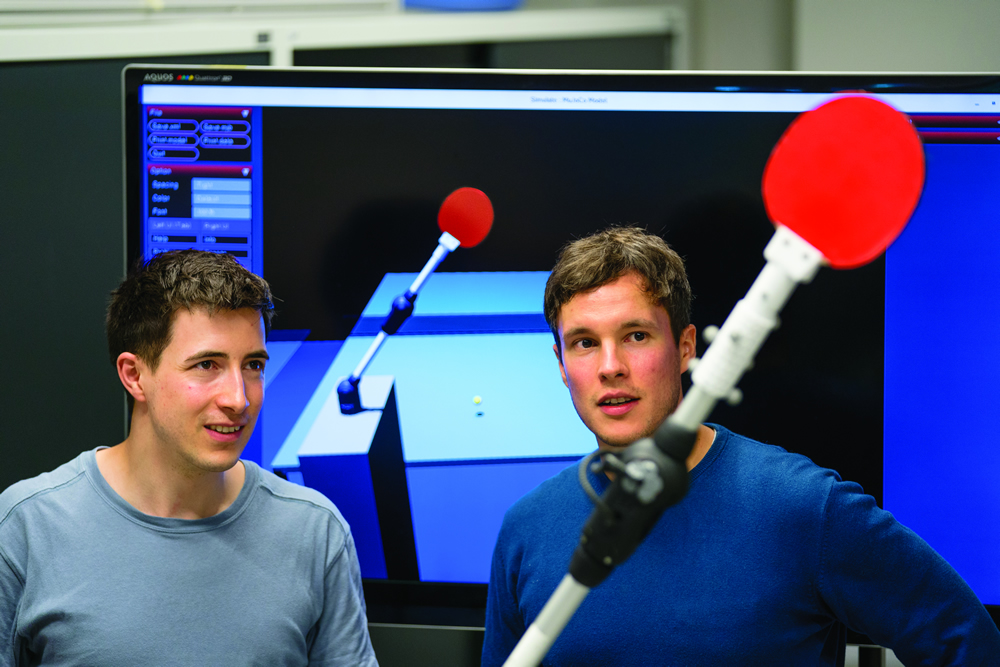

The Autonomous Motion Department focuses on research in intelligent systems that can move, perceive, and learn from experience.

We are interested in understanding how autonomous movement systems can bootstrap themselves into competent behavior by starting from a relatively simple set of algorithms and pre-structuring, and then learning from interacting with the environment. Using instructions from a teacher to get started can add useful prior information. Performing trial-and-error learning to improve movement skills and perceptual skills is another domain of our research. We are interested in investigating such perception-action-learning loops in biological systems and robotic systems, which can range in scale from nano systems (cells, nano-robots) to macro systems (humans and humanoid robots).

Information is also available here about archived departments and independent research groups that belonged to our institute for several years. There is usually still a close scientific exchange between them and us, sometimes extending to cooperation on scientific projects. Their presence on our website documents the research activities of the groups during their time at MPI-IS.