Dissecting Adam: The Sign, Magnitude and Variance of Stochastic Gradients

2018

Conference Paper


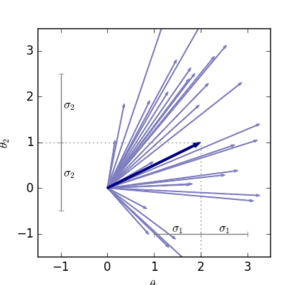

The ADAM optimizer is exceedingly popular in the deep learning community. Often it works very well, sometimes it doesn't. Why? We interpret ADAM as a combination of two aspects: for each weight, the update direction is determined by the sign of stochastic gradients, whereas the update magnitude is determined by an estimate of their relative variance. We disentangle these two aspects and analyze them in isolation, gaining insight into the mechanisms underlying ADAM. This analysis also extends recent results on adverse effects of ADAM on generalization, isolating the sign aspect as the problematic one. Transferring the variance adaptation to SGD gives rise to a novel method, completing the practitioner's toolbox for problems where ADAM fails.
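The sign/magnitude reading in the abstract can be made concrete with a small sketch. Adam's per-weight direction m/√v factors exactly into sign(m) · |m|/√v. If v is read as an estimate of E[g²] = μ² + σ², the magnitude becomes 1/√(1 + η²), where η² = σ²/μ² is the relative variance, so the step shrinks as the gradient gets noisier. The code below is pure Python, omits bias correction and the moving-average estimation of m and v, and checks that factorization. It also sketches a hypothetical variance-adapted SGD step that scales each gradient component by μ²/(μ² + σ²). For a gradient estimate g with mean μ and variance σ², this is the factor γ that minimizes E[(γg − μ)²]. All function names are illustrative assumptions. This is not the paper's M-SVAG implementation, which additionally uses momentum and its own variance estimates.

```python
import math


def adam_direction(m, v, eps=1e-8):
    """Adam-style per-weight direction m / (sqrt(v) + eps); bias correction omitted."""
    return [mi / (math.sqrt(vi) + eps) for mi, vi in zip(m, v)]


def sign_magnitude_split(m, v):
    """Split m / sqrt(v) into sign(m) and the magnitude |m| / sqrt(v).

    Assumes every v > 0. If v estimates E[g^2] = mean^2 + variance, the magnitude
    equals 1 / sqrt(1 + eta^2) with eta^2 = variance / mean^2.
    """
    signs = [math.copysign(1.0, mi) if mi != 0 else 0.0 for mi in m]
    mags = [abs(mi) / math.sqrt(vi) for mi, vi in zip(m, v)]
    return signs, mags


def variance_adapted_sgd_step(w, mean_grad, var_grad, lr):
    """Hypothetical sketch: SGD step with per-weight factor mean^2 / (mean^2 + var).

    mean_grad and var_grad are assumed to be given (e.g. estimated elsewhere);
    the factor is 1 for noise-free components and tends to 0 as noise dominates.
    """
    new_w = []
    for wi, gi, si in zip(w, mean_grad, var_grad):
        denom = gi * gi + si
        gamma = gi * gi / denom if denom > 0 else 0.0
        new_w.append(wi - lr * gamma * gi)
    return new_w


# Two weights: the first has a noise-free gradient (variance 0), the second has
# mean -0.1 and variance 0.03, so v = 0.01 + 0.03 = 0.04 and eta^2 = 3.
signs, mags = sign_magnitude_split([0.5, -0.1], [0.25, 0.04])
print(signs, mags)
print(variance_adapted_sgd_step([1.0, 1.0], [0.5, -0.1], [0.0, 0.03], lr=0.1))
```

In this example the second weight's magnitude is 1/√(1 + 3) = 0.5, so Adam halves its step relative to a pure sign update. The variance-adapted SGD step instead keeps the gradient's own scale and damps the noisy component by γ = 0.01/0.04 = 0.25.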

| Author(s): | Balles, L. and Hennig, P. |
|---|---|
| Book Title: | Proceedings of the 35th International Conference on Machine Learning (ICML) |
| Volume: | 80 |
| Pages: | 404--413 |
| Year: | 2018 |
| Month: | July |
| Series: | Proceedings of Machine Learning Research |
| Editors: | Jennifer Dy and Andreas Krause |
| Publisher: | PMLR |
| Department(s): | Probabilistic Numerics |
| Research Project(s): | Probabilistic Methods for Nonlinear Optimization |
| Bibtex Type: | Conference Paper (conference) |
| Paper Type: | Conference |
| Event Name: | ICML |
| Event Place: | Stockholmsmässan, Stockholm Sweden |
| State: | Published |
| URL: | http://proceedings.mlr.press/v80/balles18a.html |

BibTeX

    @conference{balles2018dissecting,
      title = {Dissecting Adam: The Sign, Magnitude and Variance of Stochastic Gradients},
      author = {Balles, L. and Hennig, P.},
      booktitle = {Proceedings of the 35th International Conference on Machine Learning (ICML)},
      volume = {80},
      pages = {404--413},
      series = {Proceedings of Machine Learning Research},
      editor = {Jennifer Dy and Andreas Krause},
      publisher = {PMLR},
      month = jul,
      year = {2018},
      url = {http://proceedings.mlr.press/v80/balles18a.html},
      month_numeric = {7}
    }