Combining learned and analytical models for predicting action effects from sensory data

2020

Article

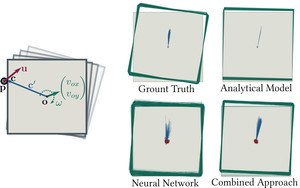

One of the most basic skills a robot should possess is predicting the effect of physical interactions with objects in the environment. This enables optimal action selection to reach a certain goal state. Traditionally, dynamics are approximated by physics-based analytical models. These models rely on specific state representations that may be hard to obtain from raw sensory data, especially if no knowledge of the object shape is assumed. More recently, we have seen learning approaches that can predict the effect of complex physical interactions directly from sensory input. It is, however, an open question how far these models generalize beyond their training data. In this work, we investigate the advantages and limitations of neural-network-based learning approaches for predicting the effects of actions based on sensory input and show how analytical and learned models can be combined to leverage the best of both worlds. As the physical interaction task, we use planar pushing, for which there exists a well-known analytical model and a large real-world dataset. We propose to use a convolutional neural network to convert raw depth images or organized point clouds into a suitable representation for the analytical model and compare this approach to using neural networks for both perception and prediction. A systematic evaluation of the proposed approach on a very large real-world dataset shows two main advantages of the hybrid architecture. Compared to a pure neural network, it significantly (i) reduces the amount of required training data and (ii) improves generalization to novel physical interactions.
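To make the hybrid idea concrete, the following is a minimal sketch, not the authors' implementation. The abstract does not say which analytical model or state representation is used, so the sketch assumes one commonly used quasi-static model for planar pushing (sticking contact with an ellipsoidal limit-surface approximation). The names `sticking_push_velocity`, `hybrid_predict`, `dummy_perception`, and the parameter value `c=0.05` are hypothetical, and the perception step is a fixed placeholder standing in for a trained convolutional network.

```python
import numpy as np


def sticking_push_velocity(contact, pusher_vel, c):
    """Assumed analytical model: quasi-static planar push with sticking contact.

    contact    -- (x, y) contact point relative to the object's centre of friction
    pusher_vel -- (vpx, vpy) pusher velocity in the same frame
    c          -- ratio of maximum frictional torque to maximum frictional force
    Returns the object twist (vx, vy, omega).
    """
    x, y = contact
    vpx, vpy = pusher_vel
    denom = c**2 + x**2 + y**2
    vx = ((c**2 + x**2) * vpx + x * y * vpy) / denom
    vy = (x * y * vpx + (c**2 + y**2) * vpy) / denom
    omega = (x * vy - y * vx) / c**2
    return np.array([vx, vy, omega])


def dummy_perception(depth_image):
    """Hypothetical placeholder for a learned perception module.

    A real system would run a trained network on the image; here a fixed
    contact point (in metres) is returned so the sketch stays runnable.
    """
    return np.array([-0.04, 0.01])


def hybrid_predict(depth_image, pusher_vel, perception_fn, c=0.05):
    """Learned perception feeds the state into the analytical prediction."""
    contact = perception_fn(depth_image)
    return sticking_push_velocity(contact, pusher_vel, c)


if __name__ == "__main__":
    image = np.zeros((4, 4))  # stand-in for a depth image
    twist = hybrid_predict(image, np.array([0.02, 0.0]), dummy_perception)
    print(twist)
```

Under the sticking assumption, the object's velocity at the contact point should equal the pusher velocity, which gives a simple sanity check for the analytical part. Only the perception function would be trained in such a setup; the prediction step has no learned parameters.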

| Author(s): | Alina Kloss and Stefan Schaal and Jeannette Bohg |
| Journal: | International Journal of Robotics Research |
| Year: | 2020 |
| Month: | September |
| Department(s): | Autonomous Motion |
| Bibtex Type: | Article (article) |
| Paper Type: | Journal |
| DOI: | 10.1177/0278364920954896 |
| State: | Published |
| URL: | https://journals.sagepub.com/doi/10.1177/0278364920954896 |
| Links: | arXiv |
| Attachments: | pdf |

BibTeX

@article{kloss_ijrr,
  title = {Combining learned and analytical models for predicting action effects from sensory data},
  author = {Kloss, Alina and Schaal, Stefan and Bohg, Jeannette},
  journal = {International Journal of Robotics Research},
  month = sep,
  year = {2020},
  doi = {10.1177/0278364920954896},
  url = {https://journals.sagepub.com/doi/10.1177/0278364920954896},
  month_numeric = {9}
}