2024

hi

L’Orsa, R., Bisht, A., Yu, L., Murari, K., Westwick, D. T., Sutherland, G. R., Kuchenbecker, K. J.

Reflectance Outperforms Force and Position in Model-Free Needle Puncture Detection

In Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Orlando, USA, July 2024 (inproceedings) Accepted

ps

Fan, Z., Parelli, M., Kadoglou, M. E., Kocabas, M., Chen, X., Black, M. J., Hilliges, O.

HOLD: Category-agnostic 3D Reconstruction of Interacting Hands and Objects from Video

Proceedings IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2024 (conference) Accepted

ei

Gao, G., Liu, W., Chen, A., Geiger, A., Schölkopf, B.

GraphDreamer: Compositional 3D Scene Synthesis from Scene Graphs

The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2024 (conference) Accepted

ps

Chhatre, K., Daněček, R., Athanasiou, N., Becherini, G., Peters, C., Black, M. J., Bolkart, T.

AMUSE: Emotional Speech-driven 3D Body Animation via Disentangled Latent Diffusion

Proceedings IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2024 (conference) To be published

ei

Guo, S., Wildberger, J., Schölkopf, B.

Out-of-Variable Generalization for Discriminative Models

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Pace, A., Yèche, H., Schölkopf, B., Rätsch, G., Tennenholtz, G.

Delphic Offline Reinforcement Learning under Nonidentifiable Hidden Confounding

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Meterez*, A., Joudaki*, A., Orabona, F., Immer, A., Rätsch, G., Daneshmand, H.

Towards Training Without Depth Limits: Batch Normalization Without Gradient Explosion

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024, *equal contribution (conference) Accepted

ei

al

Spieler, A., Rahaman, N., Martius, G., Schölkopf, B., Levina, A.

The Expressive Leaky Memory Neuron: an Efficient and Expressive Phenomenological Neuron Model Can Solve Long-Horizon Tasks

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Open X-Embodiment Collaboration ( incl. Guist, S., Schneider, J., Schölkopf, B., Büchler, D. ).

Open X-Embodiment: Robotic Learning Datasets and RT-X Models

IEEE International Conference on Robotics and Automation (ICRA), May 2024 (conference) Accepted

ei

Jin, Z., Liu, J., Lyu, Z., Poff, S., Sachan, M., Mihalcea, R., Diab*, M., Schölkopf*, B.

Can Large Language Models Infer Causation from Correlation?

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024, *equal supervision (conference) Accepted

ei

Donhauser, K., Lokna, J., Sanyal, A., Boedihardjo, M., Hönig, R., Yang, F.

Certified private data release for sparse Lipschitz functions

27th International Conference on Artificial Intelligence and Statistics (AISTATS), May 2024 (conference) Accepted

ei

ps

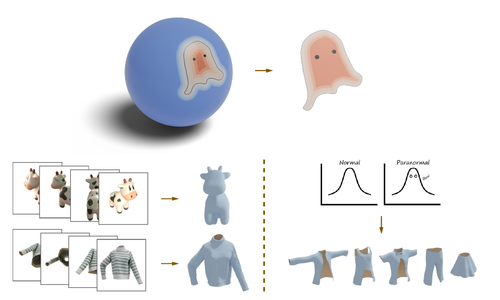

Liu, Z., Feng, Y., Xiu, Y., Liu, W., Paull, L., Black, M. J., Schölkopf, B.

Ghost on the Shell: An Expressive Representation of General 3D Shapes

In Proceedings of the Twelfth International Conference on Learning Representations, The Twelfth International Conference on Learning Representations, May 2024 (inproceedings) Accepted

ei

Schneider, J., Schumacher, P., Guist, S., Chen, L., Häufle, D., Schölkopf, B., Büchler, D.

Identifying Policy Gradient Subspaces

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

al

Khajehabdollahi, S., Zeraati, R., Giannakakis, E., Schäfer, T. J., Martius, G., Levina, A.

Emergent mechanisms for long timescales depend on training curriculum and affect performance in memory tasks

In The Twelfth International Conference on Learning Representations, ICLR 2024, May 2024 (inproceedings)

ei

Khromov*, G., Singh*, S. P.

Some Intriguing Aspects about Lipschitz Continuity of Neural Networks

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024, *equal contribution (conference) Accepted

ei

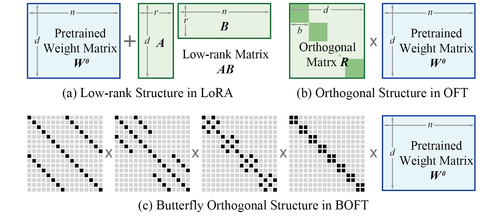

ps

Liu, W., Qiu, Z., Feng, Y., Xiu, Y., Xue, Y., Yu, L., Feng, H., Liu, Z., Heo, J., Peng, S., Wen, Y., Black, M. J., Weller, A., Schölkopf, B.

Parameter-Efficient Orthogonal Finetuning via Butterfly Factorization

In Proceedings of the Twelfth International Conference on Learning Representations, The Twelfth International Conference on Learning Representations, May 2024 (inproceedings) Accepted

ei

Pan, H., Schölkopf, B.

Skill or Luck? Return Decomposition via Advantage Functions

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Imfeld*, M., Graldi*, J., Giordano*, M., Hofmann, T., Anagnostidis, S., Singh, S. P.

Transformer Fusion with Optimal Transport

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024, *equal contribution (conference) Accepted

al

Gumbsch, C., Sajid, N., Martius, G., Butz, M. V.

Learning Hierarchical World Models with Adaptive Temporal Abstractions from Discrete Latent Dynamics

In The Twelfth International Conference on Learning Representations, ICLR 2024, May 2024 (inproceedings)

ei

Lorch, L., Krause*, A., Schölkopf*, B.

Causal Modeling with Stationary Diffusions

27th International Conference on Artificial Intelligence and Statistics (AISTATS), May 2024, *equal supervision (conference) Accepted

ei

al

Yao, D., Xu, D., Lachapelle, S., Magliacane, S., Taslakian, P., Martius, G., von Kügelgen, J., Locatello, F.

Multi-View Causal Representation Learning with Partial Observability

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Theus, A., Geimer, O., Wicke, F., Hofmann, T., Anagnostidis, S., Singh, S. P.

Towards Meta-Pruning via Optimal Transport

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024 (conference) Accepted

ei

Lin*, J. A., Padhy*, S., Antorán*, J., Tripp, A., Terenin, A., Szepesvari, C., Hernández-Lobato, J. M., Janz, D.

Stochastic Gradient Descent for Gaussian Processes Done Right

Proceedings of the Twelfth International Conference on Learning Representations (ICLR), May 2024, *equal contribution (conference) Accepted

ei

Hu, Y., Pinto, F., Yang, F., Sanyal, A.

PILLAR: How to make semi-private learning more effective

2nd IEEE Conference on Secure and Trustworthy Machine Learning (SaTML), April 2024 (conference) Accepted

hi

Mohan, M., Mat Husin, H., Kuchenbecker, K. J.

Expert Perception of Teleoperated Social Exercise Robots

In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), pages: 769-773, Boulder, USA, March 2024, Late-Breaking Report (LBR) (5 pages) presented at the IEEE/ACM International Conference on Human-Robot Interaction (HRI) (inproceedings)

ps

Liao, T., Yi, H., Xiu, Y., Tang, J., Huang, Y., Thies, J., Black, M. J.

TADA! Text to Animatable Digital Avatars

In International Conference on 3D Vision (3DV 2024), 3DV 2024, March 2024 (inproceedings) Accepted

ps

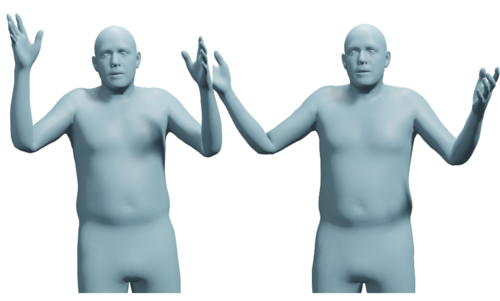

Dwivedi, S. K., Schmid, C., Yi, H., Black, M. J., Tzionas, D.

POCO: 3D Pose and Shape Estimation using Confidence

In International Conference on 3D Vision (3DV 2024), 3DV 2024, March 2024 (inproceedings)

ncs

ps

Zhang, H., Feng, Y., Kulits, P., Wen, Y., Thies, J., Black, M. J.

TECA: Text-Guided Generation and Editing of Compositional 3D Avatars

In International Conference on 3D Vision (3DV 2024), 3DV 2024, March 2024 (inproceedings) To be published

ncs

Kabadayi, B., Zielonka, W., Bhatnagar, B. L., Pons-Moll, G., Thies, J.

GAN-Avatar: Controllable Personalized GAN-based Human Head Avatar

In International Conference on 3D Vision (3DV), March 2024 (inproceedings)

ps

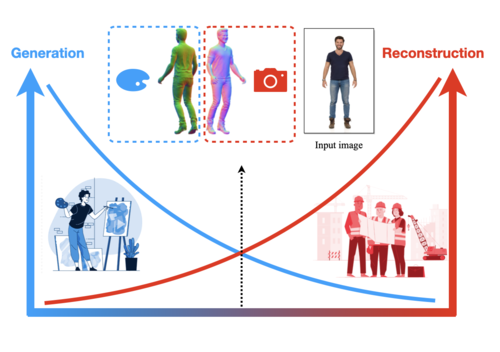

Huang, Y., Yi, H., Xiu, Y., Liao, T., Tang, J., Cai, D., Thies, J.

TeCH: Text-guided Reconstruction of Lifelike Clothed Humans

In International Conference on 3D Vision (3DV 2024), 3DV 2024, March 2024 (inproceedings) Accepted

ps

Zhang, H., Christen, S., Fan, Z., Zheng, L., Hwangbo, J., Song, J., Hilliges, O.

ArtiGrasp: Physically Plausible Synthesis of Bi-Manual Dexterous Grasping and Articulation

In International Conference on 3D Vision (3DV 2024), 3DV 2024, March 2024 (inproceedings) Accepted

ps

Ben-Dov, O., Gupta, P. S., Abrevaya, V., Black, M. J., Ghosh, P.

Adversarial Likelihood Estimation With One-Way Flows

In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), pages: 3779-3788, January 2024 (inproceedings)

ei

OS Lab

Kottapalli, S. N. M., Schlieder, L., Song, A., Volchkov, V., Schölkopf, B., Fischer, P.

Polarization-based non-linear deep diffractive neural networks

AI and Optical Data Sciences V, PC12903, pages: PC129030B, (Editors: Ken-ichi Kitayama and Volker J. Sorger), SPIE, January 2024 (conference)

ei

Song, A., Kottapalli, S. N. M., Schölkopf, B., Fischer, P.

Multi-channel free space optical convolutions with incoherent light

AI and Optical Data Sciences V, PC12903, pages: PC129030I, (Editors: Ken-ichi Kitayama and Volker J. Sorger), SPIE, January 2024 (conference)

ev

Kandukuri, R. K., Strecke, M., Stueckler, J.

Physics-Based Rigid Body Object Tracking and Friction Filtering From RGB-D Videos

In International Conference on 3D Vision (3DV), 2024, accepted, preprint arXiv: 2309.15703 (inproceedings) Accepted

ev

Li, H., Stueckler, J.

Online Calibration of a Single-Track Ground Vehicle Dynamics Model by Tight Fusion with Visual-Inertial Odometry

In Accepted for IEEE International Conference on Robotics and Automation (ICRA), 2024, accepted, preprint arXiv:2309.11148 (inproceedings) Accepted

2023

ei

Chaudhuri, A., Mancini, M., Akata, Z., Dutta, A.

Transitivity Recovering Decompositions: Interpretable and Robust Fine-Grained Relationships

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 37th Annual Conference on Neural Information Processing Systems, December 2023 (conference) Accepted

ei

Eastwood*, C., Singh*, S., Nicolicioiu, A. L., Vlastelica, M., von Kügelgen, J., Schölkopf, B.

Spuriosity Didn’t Kill the Classifier: Using Invariant Predictions to Harness Spurious Features

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 18291-18324, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)

ei

Jin*, Z., Chen*, Y., Leeb*, F., Gresele*, L., Kamal, O., Lyu, Z., Blin, K., Gonzalez, F., Kleiman-Weiner, M., Sachan, M., Schölkopf, B.

CLadder: A Benchmark to Assess Causal Reasoning Capabilities of Language Models

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 31038-31065, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *main contributors (conference)

al

Kolev, P., Martius, G., Muehlebach, M.

Online Learning under Adversarial Nonlinear Constraints

In Advances in Neural Information Processing Systems 36, December 2023 (inproceedings)

ei

Gao*, R., Deistler*, M., Macke, J. H.

Generalized Bayesian Inference for Scientific Simulators via Amortized Cost Estimation

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 80191-80219, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)

ei

Coda-Forno, J., Binz, M., Akata, Z., Botvinick, M., Wang, J. X., Schulz, E.

Meta-in-context learning in large language models

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 37th Annual Conference on Neural Information Processing Systems, December 2023 (conference) Accepted

ei

Kuznetsova*, R., Pace*, A., Burger*, M., Yèche, H., Rätsch, G.

On the Importance of Step-wise Embeddings for Heterogeneous Clinical Time-Series

Proceedings of the 3rd Machine Learning for Health Symposium (ML4H) , 225, pages: 268-291, Proceedings of Machine Learning Research, (Editors: Hegselmann, S.and Parziale, A. and Shanmugam, D. and Tang, S. and Asiedu, M. N. and Chang, S. and Hartvigsen, T. and Singh, H.), PMLR, December 2023, *equal contribution (conference)

ei

ps

Qiu*, Z., Liu*, W., Feng, H., Xue, Y., Feng, Y., Liu, Z., Zhang, D., Weller, A., Schölkopf, B.

Controlling Text-to-Image Diffusion by Orthogonal Finetuning

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 79320-79362, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)

ei

Guo*, S., Tóth*, V., Schölkopf, B., Huszár, F.

Causal de Finetti: On the Identification of Invariant Causal Structure in Exchangeable Data

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 36463-36475, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)

al

Sancaktar, C., Piater, J., Martius, G.

Regularity as Intrinsic Reward for Free Play

In Advances in Neural Information Processing Systems 37, December 2023 (inproceedings)

ei

Lin*, J. A., Antorán*, J., Padhy*, S., Janz, D., Hernández-Lobato, J. M., Terenin, A.

Sampling from Gaussian Process Posteriors using Stochastic Gradient Descent

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 36886-36912, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)

al

Zadaianchuk, A., Seitzer, M., Martius, G.

Object-Centric Learning for Real-World Videos by Predicting Temporal Feature Similarities

In Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS 2023), Advances in Neural Information Processing Systems 36, December 2023 (inproceedings)

ei

Salewski, L., Alaniz, S., Rio-Torto, I., Schulz, E., Akata, Z.

In-Context Impersonation Reveals Large Language Models’ Strengths and Biases

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 37th Annual Conference on Neural Information Processing Systems, December 2023 (conference) Accepted

ei

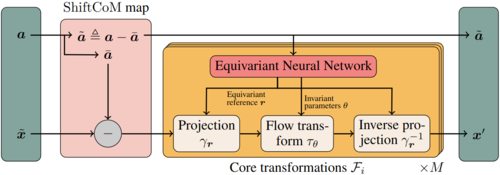

Midgley*, L. I., Stimper*, V., Antorán*, J., Mathieu*, E., Schölkopf, B., Hernández-Lobato, J. M.

SE(3) Equivariant Augmented Coupling Flows

Advances in Neural Information Processing Systems 36 (NeurIPS 2023), 36, pages: 79200-79225, (Editors: A. Oh and T. Neumann and A. Globerson and K. Saenko and M. Hardt and S. Levine), Curran Associates, Inc., 37th Annual Conference on Neural Information Processing Systems, December 2023, *equal contribution (conference)